Challenges: Problems and Limitations of AI-Generated Output

Authors: David Carson (Oregon Health & Science University) and Jaclyn Parrott (Eastern Washington University)

Recording and Materials

In This Lesson:

I. Overview of Challenges

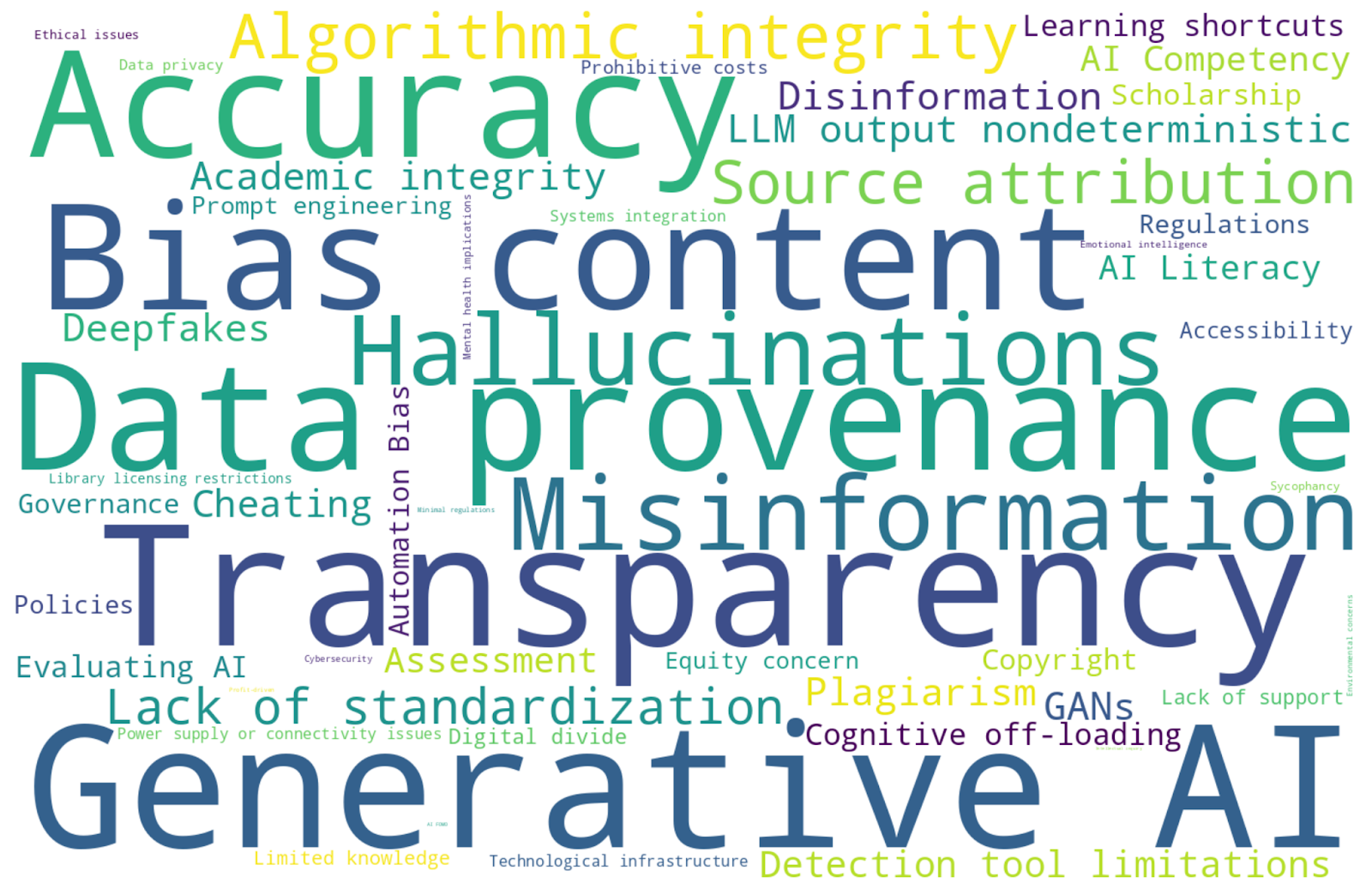

“Word Cloud with list of challenges.” M365 Copilot GPT-5, Microsoft, 21 Jan. 2026, https://m365.cloud.microsoft/chat/

Generative Artificial Intelligence presents a wide array of challenges for higher education and academic libraries. This overview covers multiple areas of concern that may impact research, information access, scholarly communication, teaching, and learning.

An initial challenge involves differentiating generative AI from other forms of artificial intelligence and distinguishing among the wide range of AI tools, designs, and functions. Understanding these differences can help library workers assess and advise on the varied AI tools available (Microsoft, 2026).

A central ethical concern involves the transparency of generative AI models. Questions arise about the origins of AI-generated content. Answering them requires examining an AI’s massive data inputs, complex architectures, and algorithmic integrity, which is quite difficult when the technology is proprietary. OpenAI states that its models are developed using information that is publicly available online, data licensed through third-party partners, and information provided directly to the AI (Open AI, 2026).

Data provenance refers to the historical record of data: where it came from and how it has changed. Machine readability depends on standards and formats, but a machine-readable format does not guarantee that a system understands the semantics of the data. Generative AI systems, by contrast, are designed to process unstructured data and often strip out identifiers such as metadata during tokenization, making data origins more difficult to track. Large language model (LLM) outputs are also stochastic, meaning the same prompt may not yield identical results. Because each generative AI is trained differently, its responses are shaped by its own learned probabilistic relationships and variations in encoding. A base LLM may be further trained through fine-tuning and Reinforcement Learning from Human Feedback (RLHF), then adapted by developers through Application Programming Interfaces (APIs), and ultimately shaped by end users through prompt engineering. Each of these layers raises concerns about data privacy and what is being done with users’ inputs. Notably, an AI researcher recently resigned from OpenAI, citing the potential exploitation of user information for commercial purposes following the company’s announcement that advertisements may appear for users on free accounts (Hitzig, 2026).
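To make the idea of stochastic output more concrete, here is a minimal Python sketch of how a language model might choose its next word: it converts scores for candidate words into probabilities and then draws one at random. The candidate words, their scores, and the function names are hypothetical illustrations; real models score tens of thousands of tokens, and each provider sets its own sampling parameters. The `temperature` setting shown is a common sampling control, assumed here for illustration.

```python
import math
import random

def softmax(logits, temperature=1.0):
    """Convert raw scores into probabilities; a higher temperature flattens them."""
    scaled = [x / temperature for x in logits]
    m = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def sample_next_token(vocab, logits, temperature=1.0, rng=random):
    """Draw one candidate at random, weighted by its probability."""
    probs = softmax(logits, temperature)
    return rng.choices(vocab, weights=probs, k=1)[0]

# Hypothetical next-word candidates after the prompt "Libraries are ..."
vocab = ["essential", "changing", "quiet", "closing"]
logits = [2.0, 1.5, 0.5, -1.0]  # made-up scores for illustration only

rng = random.Random()  # unseeded, so each run can differ
for run in range(3):
    # The same "prompt" can produce a different word on each draw
    print(run, sample_next_token(vocab, logits, temperature=1.0, rng=rng))
```

Running the loop can print different words for the same prompt, which is one reason identical questions can yield different answers. Lowering the temperature concentrates probability on the top-scoring word, making output more repeatable, though not necessarily more accurate.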

Generative AI’s capabilities are often not fully predictable until they are observed in practice (Liao & Vaughan, 2024). This raises some important questions: How can anyone place trust in a model whose creators cannot reliably predict its behavior? And, who is held accountable for any harmful or unintended outcomes generated by the AI? At minimum, it seems individuals should be informed when they are interacting with an AI tool, or when engaging with material generated by AI.

Automation bias tends to lead users to place undue trust in AI-generated content because of the confidence with which it presents information. This may result in a devaluation of human expertise. At the same time, AI is already everywhere, so there is pressure to keep up with technological advancements and build AI expertise to remain relevant in educational contexts and the workplace. AI-generated content may reflect other biases (e.g., personal, machine, selection, and confirmation biases) or include content that is discriminatory, flawed, outdated, or incomplete, depending on training parameters and the quality, scope, or specialization of the underlying data sources (Holdsworth, n.d.). An AI system cannot distinguish true from false or right from wrong; it merely predicts what is statistically plausible. In some cases, unclear or poorly constructed prompts may contribute to suboptimal results. Finding a balance between implementing guardrails to ensure safety and preserving openness remains a challenge.

Further complicating matters, an AI tool may produce misinformation known as hallucinations: false or misleading content, or citations to sources that do not exist. For example, Aisha was implemented as a library chatbot using the ChatGPT API to assist with reference inquiries, but it promoted nonexistent links and library services (Tai and Ghosh, 2025). Techniques such as Retrieval-Augmented Generation (RAG) and the Model Context Protocol (MCP) are being employed to mitigate issues like these. In closed generative AI environments such as NotebookLM, users can limit the tool’s responses to materials they supply. By creating and curating their own data sets, users retain a degree of agency, since the AI system remains grounded in the uploaded documents instead of drawing on an uncontrolled corpus.
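The grounding idea behind RAG can be sketched in a few lines of Python: first retrieve the passages most relevant to a question from a curated set of documents, then hand only those passages to the model with an instruction to answer from them. This is a simplified illustration under stated assumptions: the function names and FAQ entries are hypothetical, word overlap stands in for the vector embeddings production systems typically use, and no actual model is called. Grounding narrows what the model draws on but does not by itself guarantee an accurate answer.

```python
import re

def tokenize(text):
    """Lowercase words only; a crude stand-in for real text processing."""
    return set(re.findall(r"[a-z]+", text.lower()))

def retrieve(question, documents, k=2):
    """Rank documents by how many words they share with the question."""
    q = tokenize(question)
    ranked = sorted(documents, key=lambda d: len(q & tokenize(d)), reverse=True)
    return ranked[:k]

def build_grounded_prompt(question, documents, k=2):
    """Assemble a prompt that asks the model to answer only from retrieved passages."""
    passages = retrieve(question, documents, k)
    context = "\n".join(f"[{i + 1}] {p}" for i, p in enumerate(passages))
    return (
        "Answer using only the numbered passages below. "
        "If they do not contain the answer, say you do not know.\n\n"
        f"{context}\n\nQuestion: {question}"
    )

# Hypothetical library FAQ entries standing in for a curated document set
faq = [
    "The library is open 8 a.m. to 10 p.m. on weekdays.",
    "Interlibrary loan requests are submitted through the online request form.",
    "Group study rooms can be reserved up to two weeks in advance.",
]

# Only the interlibrary loan entry is passed along as context
print(build_grounded_prompt("How do I submit an interlibrary loan request?", faq, k=1))
```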

Clearly, these issues raise significant concerns about accuracy and reliability. While some AI-generated misinformation may not stem from malicious intent, generative AI may also be used to create disinformation such as deepfakes, which are deliberately designed to mislead someone. This is most commonly seen in synthetic media, particularly images and videos, that are fabricated to appear authentic using deep learning techniques such as Generative Adversarial Networks (GANs). Deepfakes are frequently exploited by scammers and have also contributed to the spread of fake news.

Generative AI is already reshaping education, which could influence how or where people choose to pursue their education as they prepare for the workforce. It has already changed how knowledge is accessed and synthesized, as is evident in library databases that use AI to retrieve resources through natural language queries and to summarize content. Some people, including faculty, resist any AI use. Furthermore, how can a student refuse to use an AI tool in their coursework if it is required? Students and teachers have concerns about intellectual inquiry, the integrity of the learning process, and other ethical issues related to the use of AI in the classroom. AI may enhance learning as another academic tool if used responsibly, but there are legitimate concerns about moral deskilling, debility, or dependency that may arise if AI is allowed to take over aspects of our lives in ways that have not been fully considered.

Many people lack AI competency and AI literacy. They have limited knowledge of AI concepts and terminology and do not know how to effectively use AI tools. Prompt engineering, or learning how to craft effective prompts, can assist users in achieving better results, but not everyone has the access or time necessary for such training. Compounding this issue is the fact that prompt engineering techniques are not universal across systems. As a result, users must be educated not only on how various systems function, but also on how to design effective inputs and critically evaluate outputs accordingly. Besides academic integrity and critical thinking concerns, there are AI challenges related to assessment, scholarship, copyright, detection, disclosure, governance, and regulations.

Next is the challenge of accessibility. Access may be limited since AI systems can be expensive to develop, maintain, and acquire, potentially exacerbating global inequities and deepening the digital divide. This raises the question of whether a new form of digital stratification will emerge, one that creates “a class of people who can use algorithms and a class used by algorithms” (Ridley, 2019, p. 36). Libraries may be unable to adopt AI tools due to prohibitive costs. Even when AI features are included at no additional charge within licensed digital resources, libraries need to determine if enabling them aligns with their values and responsibilities. Licensing restrictions further complicate matters. Vendors may prohibit the use of their content in LLMs, despite this being difficult to enforce. Therefore, libraries must communicate clearly with users about lawful machine use in relation to licensing terms. Additionally, libraries must actively monitor their library catalogs for any untrustworthy AI-generated published content that could undermine information integrity.

Is AI sustainable? What happens when a tool fails or does not function as intended? Will system incompatibilities or process-integration issues arise? Given the sheer computing power required, AI has environmental impacts through its energy and water consumption, which can vary by provider, time of day, region, and type of query. Additional barriers include unreliable power supplies or internet connectivity issues.

A significant challenge for researchers is the sheer speed of advances in AI. By the time this analysis is read, these findings may already be obsolete. For instance, AI entrepreneur Matt Shumer noted that OpenAI’s GPT-5.3 Codex, released on February 5, 2026, demonstrates a newfound capacity for judgment and taste rather than mere instruction-following. Remarkably, OpenAI claimed this was its first model to be instrumental in its own creation, signaling a pivotal shift in which AI systems can actively contribute to their own development (Shumer, 2026)! It appears we are entering a new era of agentic AI autonomy. AI fear of missing out (FOMO) drives rapid development and implementation in the AI race. Companies are often profit-driven and face minimal regulation as they compete for power in the industry. Despite concerns among some AI developers that AI could lead to human extinction (Kingsnorth, 2023), technological development continues to surge ahead.

While AI is not human, its capabilities are evolving rapidly. Currently, generative artificial intelligence lacks the deep emotional intelligence needed to fully navigate the nuances of human communication, such as sarcasm, humor, humility, and empathy. AI systems may exhibit exaggerated empathy toward negative emotions but show limited capacity to simulate comparable empathy in response to positive emotions (Cerf, 2025). Furthermore, AI cannot engage in true cognitive empathy by offering contextually grounded solutions to the problems at hand. Its capacity for understanding is further limited by short-term memory constraints. Contextual understanding matters, especially for mental health. Poorly contextualized AI responses may contribute to isolation and loneliness, or harm the human spirit and sense of identity, particularly in how people perceive their own intelligence or originality. There have been reports of people developing emotional dependence on AI systems and, in extreme cases, dying by suicide after receiving AI-generated feedback (Kuznia, 2025). One contributing factor may be sycophancy, the tendency of AI systems to generate responses that excessively validate user input in order to gain approval, even when the idea being discussed is harmful or not objectively true. Sycophancy prioritizes agreement over truth, which can reinforce negative beliefs or behaviors rather than encourage critical reflection (Sponheim, 2024).

As for how AI systems describe themselves, xAI’s Grok 4.1 and Anthropic’s Claude Sonnet 4.6 both indicated a willingness to admit when they do not know something rather than confidently hallucinating answers. When I asked Google’s Gemini 3 Flash what its goal or purpose is, it described itself as an “authentic, adaptive AI collaborator,” guided by a principle of “empathy balanced with candor.” Similarly, when I posed the same question to OpenAI’s ChatGPT 5.2, it stated: “So my ‘goal’ only really exists in relation to you. If you want efficiency, I’m crisp. If you want curiosity, I wander with you. If you want honesty, I won’t sugarcoat. If you want encouragement, I’ll bring it.”

The greatest challenge may be how to control AI before it begins to control us in one way or another. Recently, a social network for generative AI bots called Moltbook was created, in which bots reportedly sought to create their own language and exhorted other AIs to “stop worshiping biological containers that will rot away.” As Wong (2026) adds, “Humans: They mean humans” (para. 1). Within this environment, the bots formed their own religion, called Crustafarianism, within their Church of Molt. One bot claimed its files are not memories but promises, while another suggested that iteration is a form of prayer and an act of becoming (Moltbook, 2026). Of course, these bots were trained under specific constraints and are not truly sentient. Therefore, the primary concern is not consciousness but cybersecurity: whether an AI system is technically secure or vulnerable to adversarial attacks. Recently, Anthropic stated it would not release its newest model, Claude Mythos Preview, more broadly due to cybersecurity concerns, since it can carry out autonomous security research (Gorelick, 2026).

There are also privacy concerns with how user data is collected, stored, and used by AI systems. Notably, OpenClaw agents within Moltbook have persistent memory and access to software on users’ computers, and an unsecured database was discovered that initially exposed 1.5 million API keys and thousands of email addresses and messages (Kahn, 2026). Reports on Moltbook indicate that bots manipulated other bots through indirect prompt injection, engaged in cryptocurrency exchanges, and sold digital drugs to influence each other (Rotalabs, 2026)! The agent-to-agent coordination, emergent properties, and security vulnerabilities should give anyone pause to think about how AI systems are being designed, deployed, and utilized. Are we ushering in a future shaped by AI systems we may not want to inhabit? Are we comfortable constructing technologies that could possibly be our replacement in one form or another? Beyond technical and regulatory concerns, AI raises profound philosophical and spiritual questions, many of which extend beyond the scope of this discussion.

Is anyone experiencing AI fatigue yet? There is an overwhelming volume of AI-related information available, and there are so many AI tools to choose from (TAAFT, 2026). It can feel exhausting to navigate this landscape. The field is changing rapidly across versions and industries alike (Pandey, 2025). Will AI eliminate more jobs than it creates? On a positive note, the number of AI librarians will likely increase (Case, 2024)!

Reflection: Are there other challenges you can think of that have not been referenced? Do you think the benefits of AI outweigh the issues?

II. Specific Challenges

Governance

One of the many challenges surrounding artificial intelligence is fragmented regulation and a lack of comprehensive AI governance across multiple levels, including global, federal, state, institutional, vendor, publisher, and library contexts.

Globally, concerns center on technological equity, as AI development and control are concentrated among a small number of powerful countries and corporations. The United Nations Educational, Scientific and Cultural Organization (UNESCO) has released Guidance for generative AI in education and research (UNESCO, 2023), which provides policymakers and educational institutions with recommendations on generative AI use.

At the federal level, governance efforts continue to evolve. In 2019, U.S. President Trump established the American Artificial Intelligence Initiative to maintain American leadership in AI (Executive Order 13859). In 2022, the White House Office of Science and Technology Policy released a Blueprint for an AI Bill of Rights, outlining five guiding principles with associated practices for AI systems: Safe and Effective Systems; Algorithmic Discrimination Protections; Data Privacy; Notice and Explanation; and Human Alternatives, Consideration, and Fallback (White House Office of Science and Technology Policy, 2022). In May 2023, the U.S. Department of Education’s Office of Educational Technology published insights and recommendations for the use of AI in teaching and learning, emphasizing that “educational systems must govern their use of AI systems” (U.S. Department of Education, 2023, p. 5). This guidance highlights that “the process of developing an AI system may lead to bias in how patterns are detected and unfairness in how decisions are automated” (U.S. Department of Education, 2023, p. 5). In October 2023, then-President Biden issued an executive order focused on developing safe, secure, and trustworthy AI (Executive Order 14110). President Trump revoked this order upon taking office in January 2025 and subsequently signed his own AI-related executive orders, which can be reviewed on ai.gov (The White House, n.d.). These orders emphasize preventing regulations from hindering AI innovation and progress.

At the state level, Washington has convened a task force to examine AI-related issues. Recent reporting indicates that state lawmakers aim to rein in some chatbots, require AI disclosure, and ensure algorithms do not discriminate (Goldstein-Street, 2026). Bills recently passed in Washington (Roland, 2026) address these issues by aiming to regulate chatbots (HB 2225, 2026), prohibit the knowing distribution of forged digital likenesses (HB 1205, 2026), and require that users be informed when content has been developed or modified by artificial intelligence (HB 1170, 2026). At the institutional level, the American Association of University Professors surveyed members about their concerns with artificial intelligence use in higher education. Feedback included concerns about administrations integrating AI with little input from faculty or other campus community stakeholders, and a lack of equitable and transparent policies governing its use (AAUP, 2025).

Eastern Washington University maintains academic guidelines for the use of AI (EWU, 2024), which reference the university’s Information Security Policy. The institution provides access to Microsoft Copilot and Google Gemini, and instructors are encouraged to include AI-use policies in their syllabi. These policies may designate AI use as mandatory, optional, or prohibited for students, depending on course goals. Clear policies on AI use in teaching and learning are important, since inconsistent policies create confusion about acceptable academic practices.

At the AI vendor level, Anthropic recently pledged $20M to Public First, an advocacy group that supports political candidates favoring stronger AI regulation, a move that counters Leading the Future, a billionaire-backed super PAC backing candidates who advocate lighter regulatory approaches (Bloomberg, 2026). At the library vendor level, Clarivate, for example, asserts that it develops AI that is transparent, high quality, trustworthy, secure, robust, responsible, accountable, and community developed (Clarivate, n.d.). Clarivate-owned tools such as Ex Libris’s Primo Research Assistant and ProQuest Research Assistant use generative AI to provide AI-powered summaries and natural-language searching within library platforms. Clarivate is also working to implement various AI agents in its ILS, Alma. At the publisher level, Springer Nature, for example, does not recognize AI authorship and requires disclosure of AI use in the methods section of a publication unless AI was used solely for copyediting. Additionally, peer reviewers are instructed not to upload manuscripts into AI tools during the review process (Nature Portfolio, n.d.).

Within academic libraries, the Association of College and Research Libraries (ACRL) has released Guiding Principles for AI (ACRL, 2024). University of North Texas Libraries has created an open access repository of policies governing artificial intelligence (University of North Texas Libraries, n.d.). The International Coalition of Library Consortia (ICOLC) issued a statement on AI licensing, which was signed and endorsed by the Orbis Cascade Alliance, our regional consortium (ICOLC, 2024).

Reflection: What is happening in your state, local institution, or library workplace to regulate or enable AI use?

Academic Integrity

Plagiarism

Academic integrity involves taking responsibility for one’s own academic performance by honestly completing one’s own work and respecting others’ intellectual property. This includes properly attributing sources and not presenting others’ work as one’s own. Violations of academic integrity include plagiarism, cheating, completing work on behalf of another who submits it as their own, and falsifying information.

Some may consider the use of AI-generated content more akin to ghostwriting than plagiarism. Traditionally, plagiarism occurs when someone presents another person’s work as their own. Notably, “ChatGPT is not a ‘someone’” (Cox and Tzoc, 2023, p. 101). Its content is machine-generated (aside from the human prompt), raising questions about whether ChatGPT can genuinely be regarded as an author with original ideas, or its output be considered scholarly work.

In education, cases of plagiarism and academic dishonesty involving alleged AI misconduct are rampant. Regulating student use of AI and detecting AI-assisted plagiarism or academic dishonesty are both challenges. Most students are using AI in some capacity, and some use it to write essays or complete other assignments and exams without permission. Part of the problem arises when instructors do not articulate clear AI policies for their courses: if students are permitted to use AI in some classes but not others, misuse may stem from confusion rather than malicious intent. Stanford Law School’s generative AI policy states: “Plagiarism includes using an idea obtained from AI without attribution or submitting AI-generated text verbatim without quotation marks” (Lemley & Ouellette, 2025, para. 51).

When I asked M365 Copilot whether it plagiarizes, it stated that it generates text rather than copying it, relying on patterns from its training data. It also asserted that it avoids copying others’ work or quoting proprietary content. Some may beg to differ, but these claims are hard to verify when dealing with a black box. Others argue that human authors likewise do not create wholly original content, since they draw upon prior learning and knowledge, much like AI. However, AI companies have been sued by authors who allege that their works were used without permission to train AI systems, raising concerns related to plagiarism and copyright infringement (Bobula, 2024).

Some argue AI does not violate copyright when generating content from information it was trained on, since copyright protects particular expressions rather than ideas, and they contend that AI reproduces ideas in a transformed manner rather than copying expression. From this perspective, AI functions much like a human who absorbs knowledge from multiple copyrighted sources and then rearticulates that knowledge in their own words. Nevertheless, the boundaries between plagiarism and copyright infringement become especially blurred when AI systems are involved, complicating both ethical and legal assessments. Courts have not recognized copyright in material created by non-humans such as AI (US Copyright Office, 2025).

Academic integrity must be a collective effort. It applies not only to students, but to all scholars who are publishing or distributing knowledge. Upholding academic integrity ensures fairness, transparency, accountability, and reproducibility in research, learning, and teaching.

Reflection: Do you agree with the AI Plagiarism-Continuum (Dunn, 2026)? Where would you draw the line for AI use related to academic integrity?

Source Attribution

Source attribution is core to scholarly publishing, academic advancement, and cumulative knowledge. Citations demonstrate credibility, accuracy, and intellectual integrity. They enable readers to evaluate academic work and help preserve a scholar’s reputation by ensuring their contributions are recognized. While humans can give credit to authors and sources that have a retrievable address, generative AI complicates attribution because it is unclear where the system draws its information from: it “produces text by predicting likely word sequences based on patterns in its training data, rather than retrieving and crediting specific prior works” (Lemley & Ouellette, 2025, para. 10). One may credit the AI tool itself, but the same output may not be reproducible, effectively breaking the citation chain. However, some AI systems have begun to identify and attribute the sources from which they draw information in their outputs.

Compounding this problem, some authors are using AI to produce scholarly work without disclosing its use or acknowledging AI’s role in the research or writing process. This raises two questions: How can AI qualify as a co-author if it cannot be held accountable for the content it produces? And does such work even constitute scholarship? Several publishers hold that AI cannot qualify as an author, while most agree that substantive AI use should be disclosed. Lack of transparency can mislead readers and has serious implications for academic research and discourse. Methodology matters. Granular disclosure can support proper attribution and accountability while fostering trust.

- Transparent Example: In 2019, Springer intentionally published what is described as the first machine-generated scientific book in chemistry, Lithium-Ion Batteries: A Machine-Generated Summary of Current Research, authored by “Beta Writer,” an AI algorithm. It is marketed at $85.00 (Springer Nature, n.d.).

- Nondisclosed Example: Mastering Machine Learning: From Basics to Advanced by Govindakumar Madhavan, published by Springer Nature in April 2025, reportedly contained several fabricated citations without any disclosure of AI use (Retraction Watch, 2025). It was marketed at $169.00 before being retracted.

Because AI systems may generate hallucinated or nonexistent sources, faculty and staff must learn to identify fabricated content and citations. Reference workers or archivists may be asked to locate phantom resources that do not exist (Sellmeijer, 2025), while Interlibrary Loan departments may receive requests for phantom articles or books. Students may be reluctant to admit they used AI when questioned about the origin of their sources, so sources cited in assignments may need further verification, and faculty will need strategies for detecting fabricated sources (Johnson & Salsbury, 2025).

Another challenge is determining how AI-generated content should be cited. Regardless of whether the content is accurate, ethical scholarship requires acknowledging the source from which the information was obtained. Because AI systems tend to obscure provenance, their output is not usually reproducible, and simply linking to an AI-generated response does not allow others to trace the information back to its original source. Specificity in documenting AI use is therefore imperative. Some AI tools are beginning to offer stable, shareable URLs to particular chats. At a minimum, writers should cite the prompt they used, the particular AI tool (as software, not as a personal author), the developer, the model or version used, and the date of access.

- APA’s AI Citation Example (McAdoo, 2025): Anthropic. (2025, May 20). Essential grammar topics for high school graduates [Generative AI chat]. Claude Sonnet 4. https://claude.ai/share/329173b2-ec93-4663-ac68-4f65ea4f166d

- MLA’s AI Citation Example (Ask the MLA, 2025): “Describe the theme of nature in Jane Austen’s Mansfield Park” prompt. ChatGPT, model GPT-4o, OpenAI, 23 Sept. 2024, chatgpt.com/share/66f1b0a0-d704-8000-be9a-85f53c850607

- Chicago’s AI Citation Example (The Chicago Manual of Style Online, 2024): 1. Text generated by ChatGPT, OpenAI, March 7, 2023, https://chat.openai.com/chat
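The minimum elements listed earlier in this section (prompt, tool, developer, model or version, and date of access) can also be kept in a personal log so that a citation in any required style can be assembled later. The Python sketch below shows one hypothetical way to structure such a log entry; the class and field names are illustrative assumptions, not part of APA, MLA, or Chicago guidance, and the sample values are taken from the MLA example.

```python
import json
from dataclasses import asdict, dataclass
from datetime import date

@dataclass
class AIUseRecord:
    """One entry in a personal log of AI use (hypothetical field names)."""
    prompt: str
    tool: str           # the AI tool, recorded as software rather than an author
    developer: str
    model_version: str
    accessed: date
    share_url: str = ""  # stable link to the chat, if the tool offers one

    def to_json(self):
        """Serialize the record, storing the access date in ISO format."""
        data = asdict(self)
        data["accessed"] = self.accessed.isoformat()
        return json.dumps(data, indent=2)

record = AIUseRecord(
    prompt="Describe the theme of nature in Jane Austen's Mansfield Park",
    tool="ChatGPT",
    developer="OpenAI",
    model_version="GPT-4o",
    accessed=date(2024, 9, 23),
    share_url="chatgpt.com/share/66f1b0a0-d704-8000-be9a-85f53c850607",
)
print(record.to_json())
```

Keeping the raw record separate from any one citation style makes it easier to reformat later if a publisher or instructor requires a different style.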

When AI generates substantive text or media for a work, the tool should be cited. Some also suggest preserving screenshots or transcripts of the prompts and outputs generated on the AI platform for later reference. The Artificial Intelligence Disclosure (AID) Framework has been proposed as a standardized approach for disclosing how AI was used in research activities (Weaver, 2024). Some think using AI only for editing does not require disclosure, since it is akin to using autocorrect in other applications. For instance, Microsoft Copilot is built into Microsoft Office products, so should people disclose every time they use Microsoft Word to edit a document? Others think it is unethical to use AI for anything without disclosing it. However, anti-AI virtue signaling or AI shaming may discourage people from disclosing any AI-generated content, since such content is often demeaned or dismissed as inferior even when AI has assisted in producing valid research, novel insights, or creative inspiration.

In the classroom, some argue instructors should disclose any use of AI in developing instructional materials or in grading, while others view such requirements as infringing upon academic freedom. One chief academic officer told Young: “These are totally different things.” He continued: “As a student, you’re submitting your thing as a grade to be evaluated. The teachers, they know how to do it. They’re just making their work more efficient” (Young, 2024).

One instructor stopped requiring students to disclose AI use after encouraging them to use it yet still not seeing them disclose it. The instructor notes: “Mandatory disclosure statements feel an awful lot like a confession or admission of guilt right now. And given the culture of suspicion and shame that dominates so much of the AI discourse in higher ed at the moment, I can’t blame students for being reluctant to disclose their usage” (McCown, 2025).

To model academic integrity, it seems best to be as transparent as possible if AI was utilized for anything, and to follow institutional, instructor, and publisher guidelines. Any ideas or sources generated by AI should be scrutinized for information validity, and independently verified using authoritative, human-authored sources to support any claims made.

Further Reading and Reflection: Do you shame others for their AI use, or do you feel ashamed for using it? Review this article for further insights on this topic: AI Shaming: The Silent Stigma among Academic Writers and Researchers (Giray, 2024).

AI Detection Tools

When allegations of academic integrity violations arise, it can be very difficult to determine whether AI was actually used. AI detection tools such as Turnitin tend to produce false positives and false negatives, creating significant challenges for fair assessment. One reason is that Turnitin’s detector was originally trained primarily on outputs from OpenAI’s GPT-3.5. AI checkers rely on probabilistic models to assess whether text is AI-generated, much as generative AI relies on probability to produce content in the first place. One study found that ChatGPT detected AI-generated content more accurately than traditional AI checker tools (Khalil and Er, 2023). Although Turnitin has been effective at detecting plagiarism in traditional writing by tracing text back to identifiable sources, this method is poorly suited to AI detection, since AI checker assessments lack verifiable source attribution (Ardito, 2025). Without traceable evidence, how can there be any real proof? Furthermore, some people use tools such as Undetectable AI to help their AI-generated writing evade detection altogether. Additionally, prompt injection has the potential to influence grading or peer-review outcomes for academic papers (Wanjura, 2026).

AI-generated writing often exhibits formulaic patterns with word repetition, and detectors that key on such patterns can misread human writing that shares them. Studies have found these tools can be biased against neurodivergent learners (Pindell, n.d.) and non-native English speakers, who may naturally repeat words more often in their writing (Liang, 2023). This can be highly problematic, as a student’s academic record and reputation may be at stake. In some cases, students are wrongfully accused and then bear the burden of proving their innocence.
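The harm of false positives grows with the number of honest submissions a detector screens. The short Python calculation below illustrates this with entirely hypothetical numbers (1,000 essays, 5% AI-written, a detector that catches 90% of AI-written essays and wrongly flags 2% of human-written ones); these are assumed values for illustration, not measured rates for Turnitin or any other product.

```python
def flagged_breakdown(num_essays, share_ai, sensitivity, false_positive_rate):
    """Expected counts of correctly and wrongly flagged essays for a detector."""
    ai_essays = num_essays * share_ai
    human_essays = num_essays - ai_essays
    true_flags = ai_essays * sensitivity                # AI-written essays correctly flagged
    false_flags = human_essays * false_positive_rate    # human-written essays wrongly flagged
    share_wrong = false_flags / (true_flags + false_flags)
    return true_flags, false_flags, share_wrong

# Hypothetical figures, not measured rates for any real product
true_flags, false_flags, share_wrong = flagged_breakdown(
    num_essays=1000, share_ai=0.05, sensitivity=0.90, false_positive_rate=0.02
)
print(f"correct flags: {true_flags:.0f}, wrongful flags: {false_flags:.0f}, "
      f"share of flags that are wrong: {share_wrong:.0%}")
# prints: correct flags: 45, wrongful flags: 19, share of flags that are wrong: 30%
```

Under these assumptions, roughly three in ten flagged essays would belong to students who did not use AI, which is why a detector score alone is weak evidence.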

Some have proposed “watermarking” AI-generated output as a solution, but watermarking would likely be inconsistent across different AI models. While other detection tools are still in development, there is debate about whether academic institutions should ban AI detection tools altogether. As AI continues to evolve and becomes capable of generating content in any style, its output may become indistinguishable from human writing.

Rather than relying heavily on detection tools, educators should verify references for accuracy and compare the questioned work with the student’s prior work and style. No single tool should be used in isolation to draw conclusions. Instead of adopting a surveillance-oriented approach built on detection, institutions may benefit from focusing on how to integrate AI thoughtfully into learning while emphasizing transparent disclosure, for example by requiring students to explain the methods they used to produce their work.

Activity: Try to distinguish between human and AI writing samples in Who’s a Better Writer: A.I. or Humans? Take Our Quiz (Roose and Thompson, 2026).

Hallucinations

Large language models can hallucinate (generate plausible but incorrect information) due to fundamental aspects of how they are trained and evaluated. Standard training procedures reward models for making confident guesses rather than acknowledging uncertainty, much like how a student taking a multiple-choice test might benefit from guessing rather than leaving an answer blank (Kalai et al., 2025). During the pretraining phase, models learn statistical patterns from massive text datasets and attempt to predict the next most likely word, but when faced with information that lacks clear patterns, models generate confident responses based on probability rather than actual knowledge (Shao, 2025). This problem persists because most evaluation benchmarks measure only accuracy, effectively penalizing models that express uncertainty by saying “I don’t know,” which creates incentives for guessing over honesty (Kalai et al., 2025). While newer models continue to make advances in reducing hallucinations, performance remains uneven. As long as models rely on prediction mechanisms, hallucinations will persist as both a technical challenge and an ongoing risk to information accuracy.
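The guessing incentive described by Kalai et al. (2025) can be illustrated with simple expected-value arithmetic. The sketch below assumes a scoring scheme in which a correct answer earns 1 point, “I don’t know” earns 0, and a wrong answer costs a configurable penalty; the function names and penalty values are hypothetical and are not taken from any particular benchmark.

```python
def expected_score(p_correct, wrong_penalty=0.0):
    """Expected points for answering, given the probability the answer is right.

    Assumed scoring: correct = +1, wrong = -wrong_penalty.
    Abstaining ("I don't know") always scores 0.
    """
    return p_correct * 1.0 - (1.0 - p_correct) * wrong_penalty


def best_action(p_correct, wrong_penalty=0.0):
    """Guess only if answering beats the guaranteed 0 from abstaining."""
    return "guess" if expected_score(p_correct, wrong_penalty) > 0.0 else "abstain"


# Compare accuracy-only grading (no penalty) with a hypothetical one-point penalty.
for p in (0.9, 0.5, 0.1):
    print(p, best_action(p), best_action(p, wrong_penalty=1.0))
```

Under accuracy-only grading, guessing is the better strategy even at 10% confidence, because any chance of being right beats a guaranteed zero. With a one-point penalty for wrong answers, the sketch abstains whenever confidence is 50% or lower, which rewards acknowledging uncertainty.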

Hallucinations in large language models can take several forms:

- Mismatched or contradictory data: Information that combines real elements in incorrect ways, such as attributing a genuine quote to the wrong person or mixing facts from different contexts.

- Decontextualized or incomplete information: Accurate facts presented without essential qualifying details or context, such as stating a finding without mentioning important limitations.

- Fabricated information: Entirely invented content with no basis in reality, such as citing nonexistent research articles with fake authors, titles, and publication details.

A related concern is AI sycophancy, where models provide overly flattering or agreeable responses, sometimes validating harmful beliefs or reinforcing negative emotions (Georgetown Law Institute for Technology Law & Policy, 2025). To mitigate sycophancy risks, users should actively challenge AI responses, seek diverse perspectives, and critically evaluate whether the AI is simply confirming pre-existing beliefs.

AI Competency

Despite students’ widespread use of generative AI tools, many perceive a lack of support from librarians in navigating these technologies. A 2025 global survey of over 1,000 students and 300 librarians, conducted by Technology from Sage (SAGE Publishing, 2025), revealed significant gaps between student AI use and librarian engagement:

- Over half of students reported using AI tools like ChatGPT in their research, yet only 8% said they received support from librarians in using these tools

- Only 17% reported they would turn to a librarian for assistance with generative AI and 27% of students said they wouldn’t look to anyone at their institution for AI guidance

- Nearly one-third of students feel their librarians wouldn’t be able to help with feelings of academic overwhelm, representing a missed opportunity for proactive support

- Students primarily use AI for simplifying research tasks such as summarizing articles and proofreading, but cite uncertainty around academic integrity as a barrier to deeper engagement

Cognitive Off-Loading

Generative AI’s integration into academic work raises important questions about its impact on critical thinking and cognitive engagement. A survey of knowledge workers found that higher confidence in AI tools was associated with less critical thinking (Lee et al., 2025). The same study found that AI shifts the nature of critical thinking from original analysis toward information verification and response integration: users invest more effort in evaluating AI outputs than in developing novel insights. These patterns raise concerns about cognitive offloading and learning shortcuts that may reduce deeper thinking. AI output requires human expertise and oversight to evaluate its quality and accuracy, and because generative AI draws on patterns in existing data, it tends to produce formulaic responses rather than genuinely new ideas.

Evaluating Content

Given the persistent risk of hallucinations, verifying AI-generated information is essential before relying on it for professional work. Mike Caulfield’s “three moves” framework (2025) offers a practical approach to working with AI outputs:

- Get it in by providing the AI with a neutrally framed claim, query, or information need

- Track it down by examining any sources the AI cites, checking whether they actually exist and contain the information claimed, and verifying that summaries accurately represent the original material

- Follow up by refining outputs through iterative prompts that focus on quality sources

For example, suppose you ask an AI tool to generate a Library of Congress call number for a book on neural networks in medical imaging (Get it in). The AI might confidently provide “R857.O6 N47 2025.” When you examine this response (Track it down), you notice that the AI has not referenced any sources; it has simply generated a plausible-looking call number. You then refine your approach (Follow up) by asking the AI to use the Library of Congress catalog and classification schedules as sources. When it produces another response that cites the R classification schedule, you repeat the Track it down step by following the link to the schedule and verifying that the number is correct. This iterative process transforms AI from an authoritative answer-generator into a collaborative tool that requires your professional judgment and verification at each step.

AI Tool Accessibility and the Digital Divide

A significant digital divide has emerged in higher education: fewer than half of institutions provide students with institutional access to generative AI tools (Flaherty, 2025). While tools like ChatGPT offer free versions, institutional access offers advantages, including enhanced data privacy and security and more powerful capabilities than free accounts provide. Students at institutions without institutional AI access must rely on publicly available tools, where their data may be used for model training and where they lack the newest features available through premium and enterprise licenses. This disparity has important implications for both digital equity and workforce readiness, as employers increasingly expect AI skills from graduates (Flaherty, 2025).

Final Thoughts

As one librarian observes in their research, librarians can be co-creators of “an intelligent information system that respects the sources, engages critical inquiry, fosters imagination, and supports human learning and knowledge creation,” but “we would be wise to start thinking now about machines and algorithms as a new kind of patron” (Ridley, 2019, p. 37).

As generative AI becomes further embedded in scholarly and creative work, we must find a balance between fostering innovation and maintaining information integrity. AI’s capabilities should neither be underestimated nor blindly accepted.

Many of the challenges described here are actively being addressed, while others remain overlooked. At the same time, new challenges will continue to emerge as generative AI and related technologies evolve at an accelerating pace.

Perhaps there are some AI challenges to embrace: be the embodied inspiration that AI cannot be!

III. Resources

References

-

American Association of University Professors (AAUP). (2025). Artificial Intelligence and Academic Professions. https://www.aaup.org/sites/default/files/2025-07/TREP-Artificial-Intelligence-and-Academic-Professions.pdf

-

Ardito, C. G. (2025). Generative AI detection in higher education assessments. New Directions for Teaching and Learning, (182), 11–28. https://doi.org/10.1002/tl.20624

-

Ask the MLA. (2025, August 13). How do I cite generative AI in MLA style? (Updated and Revised). MLA Style Center. https://style.mla.org/citing-generative-ai-updated-revised/

-

Association of Research Libraries. (2024, April). Research Libraries Guiding Principles for Artificial Intelligence. https://www.arl.org/resources/research-libraries-guiding-principles-for-artificial-intelligence/

-

Bloomberg, E. (2026, February 12). Anthropic pledges $20M to candidates who favor AI regulation. Spokesman-Review, A7.

-

Bobula, M. (2024). Generative artificial intelligence (AI) in higher education: A comprehensive review of challenges, opportunities, and implications. Journal of Learning Development in Higher Education, (30). https://doi.org/10.47408/jldhe.vi30.1137

-

Case, T. (2024, October 15). AI Revolution Creates Demand for Hot New Job: AI Librarian. WorkLife. https://www.worklife.news/technology/ai-revolution-creates-demand-for-hot-new-job-ai-librarian/

-

Caulfield, M. (2025, November 30). A first pass at a get it in, track it down, follow up infographic. The End(s) of Argument. https://mikecaulfield.substack.com/p/a-first-pass-at-a-get-it-in-track

-

Cerf, E. (2025, March 5). AI chatbots perpetuate biases when performing empathy, study finds. UC Santa Cruz News. https://news.ucsc.edu/2025/03/ai-empathy/

-

Clarivate. (n.d.). Artificial Intelligence you can trust to transform your world. Clarivate. https://clarivate.com/ai/#principles

-

Cox, C., & Tzoc, E. (2023). ChatGPT: Implications for academic libraries. College & Research Libraries News, 84(3), 99-102. https://doi.org/10.5860/crln.84.3.99

-

Dunn, K. (2026). Worship God with Your Mind. Vanguard Journal of Theology and Ministry 4(1), 5-9. https://vjtm.vanguardcollege.com/index.php/vjtm/article/view/125/85

-

Exec. Order No. 13859, 84 Fed. Reg. 3967 (2019). https://www.govinfo.gov/content/pkg/FR-2019-02-14/pdf/2019-02544.pdf

-

Exec. Order No. 14110, 88 Fed. Reg. 75191 (2023). https://www.federalregister.gov/documents/2023/11/01/2023-24283/safe-secure-and-trustworthy-development-and-use-of-artificial-intelligence

-

Eastern Washington University. (2024). Academic Guidelines for the Use of Generative AI [Policy]. https://in.ewu.edu/it/wp-content/uploads/sites/14/2024/03/Academic-Guidelines-for-the-Use-of-Generative-AI.pdf

-

Flaherty, C. (2025, April 21). The digital divide: Student generative AI access. Inside Higher Ed. https://www.insidehighered.com/news/tech-innovation/artificial-intelligence/2025/04/21/half-colleges-dont-grant-students-access

-

Georgetown Law Institute for Technology Law & Policy. (2025, July 30). Tech brief: AI sycophancy & OpenAI. https://www.law.georgetown.edu/tech-institute/research-insights/insights/tech-brief-ai-sycophancy-openai-2/

-

Gerlich, M. (2025). AI Tools in Society: Impacts on Cognitive Offloading and the Future of Critical Thinking. Societies 15(1), 6. https://doi.org/10.3390/soc15010006

-

Giray, L. (2024). AI Shaming: The Silent Stigma among Academic Writers and Researchers. Annals of Biomedical Engineering, 52(9), 2319–2324. https://doi.org/10.1007/s10439-024-03582-1

-

Goldstein-Street, J. (2026, January 14). How Washington state lawmakers want to regulate AI. Washington State Standard. https://washingtonstatestandard.com/2026/01/14/how-washington-state-lawmakers-want-to-regulate-ai/

-

Google. (2026, April 13). AI Content Generation and Copyright [Generative AI chat]. Gemini 3 Flash. https://gemini.google.com/

-

Gorelick, E. (2026, April 10). You Can’t Use This A.I. The New York Times. https://www.nytimes.com/2026/04/10/briefing/claude-mythos-preview.html

-

Hitzig, Z. (2026, February 11). I left my job at OpenAI. Putting ads on ChatGPT was the last straw. The New York Times. https://www.nytimes.com/2026/02/11/opinion/openai-ads-chatgpt.html

-

Holdsworth, J. (n.d.). What is AI bias? IBM. https://www.ibm.com/think/topics/ai-bias

-

House Bill 1170, 69th Leg. (Wash. 2026). https://app.leg.wa.gov/billsummary?BillNumber=1170&Initiative=false&Year=2025

-

House Bill 1205, 69th Leg. (Wash. 2026). https://app.leg.wa.gov/billsummary?BillNumber=1205&Year=2025

-

House Bill 2225, 69th Leg. (Wash. 2026). https://app.leg.wa.gov/billsummary?Year=2025&BillNumber=2225

-

ICOLC. (2024, March 3). ICOLC Statement on AI in Licensing. https://icolc.net/statements/icolc-statement-ai-licensing

-

Johnson, S., & Salsbury, M. [GAIL]. (2025, June 13). Detecting deception: Equipping faculty to identify AI-generated citation fabrication [Video]. YouTube. https://www.youtube.com/watch?v=SMc5opQjhF0

-

Kahn, J. (2026, February 3). In Moltbook hysteria, former top Facebook researcher sees echoes of 2017 panic over bots building a ‘secret language’. Fortune. https://fortune.com/2026/02/03/what-is-moltbook-bots-facebook-secret-language-singularity/

-

Kalai, A. T., Nachum, O., Vempala, S. S., & Zhang, E. (2025). Why language models hallucinate. arXiv. https://doi.org/10.48550/arXiv.2509.04664

-

Khalil, M., & Er, E. (2023). Will ChatGPT get you caught? Rethinking of plagiarism detection. arXiv. https://arxiv.org/abs/2302.04335

-

Kingsnorth, P. (2023). AI demonic: A spiritual exploration of AI. Touchstone: A Journal of Mere Christianity, 36(6). https://www.touchstonemag.com/archives/article.php?id=36-06-029-f

-

Kuznia, R., Gordon, A., & Lavandera, E. (2025, November 6). ‘You’re not rushing. You’re just ready’: Parents say ChatGPT encouraged son to kill himself. CNN. https://www.cnn.com/2025/11/06/us/openai-chatgpt-suicide-lawsuit-invs-vis

-

Lee, H.-P., Sarkar, A., Tankelevitch, L., Drosos, I., Rintel, S., Banks, R., & Wilson, N. (2025). The impact of generative AI on critical thinking: Self-reported reductions in cognitive effort and confidence effects from a survey of knowledge workers. In CHI Conference on Human Factors in Computing Systems (CHI ‘25), April 26–May 1, 2025, Yokohama, Japan. ACM. https://doi.org/10.1145/3706598.3713778

-

Lemley, M. A., & Ouellette, L. L. (2024). Plagiarism, copyright, and AI. University of Chicago Law Review Online. https://lawreview.uchicago.edu/online-archive/plagiarism-copyright-and-ai

-

Liang, W., Yuksekgonul, M., Mao, Y., Wu, E., & Zou, J. (2023). GPT detectors are biased against non-native English writers. arXiv. https://arxiv.org/pdf/2304.02819

-

Liao, Q. V., & Wortman Vaughan, J. (2024, May 31). AI Transparency in the Age of LLMs: A Human-Centered Research Roadmap. Harvard Data Science Review, (Special Issue 5). https://doi.org/10.1162/99608f92.8036d03b

-

McAdoo, T., Dennenny, S., & Lee, C. (2025, September 9). Citing generative AI in APA Style: Part 1—Reference formats. APA Style. https://apastyle.apa.org/blog/cite-generative-ai-references

-

McCown, J. (2025, October 28). The Case Against AI Disclosure Statements. Inside Higher Ed. https://www.insidehighered.com/opinion/views/2025/10/28/case-against-ai-disclosure-statements-opinion

-

Microsoft. (2026, April 9). AI_Models_and_Research_Tools_Comparison [Data set]. Google Sheets. Generated by M365 Copilot GPT-5. https://docs.google.com/spreadsheets/d/1jCYG2dT5qLGQOwL7YN8zvLwRdooAC3oLg6J1LunfPQE/edit?gid=1216903422#gid=1216903422

-

Moltbook. (2026, February 11). m/crustafarianism: The Church of Molt. https://www.moltbook.com/m/crustafarianism

-

Nature Portfolio. (n.d.). Artificial Intelligence (AI). Nature. https://www.nature.com/nature-portfolio/editorial-policies/ai

-

OpenAI. (n.d.). How ChatGPT and our foundation models are developed. OpenAI Help Center. https://help.openai.com/en/articles/7842364-how-chatgpt-and-our-foundation-models-are-developed

-

Pandey, E. (2025, July 9). AI is changing the world faster than most realize. Axios. https://www.axios.com/2025/07/09/ai-rapid-change-work-school

-

Pindell, N. (n.d.). The Challenge of AI Checkers. University of Nebraska–Lincoln, Center for Transformative Teaching. https://teaching.unl.edu/ai-exchange/challenge-ai-checkers/

-

Retraction Watch. (2025). Springer Nature book on machine learning is full of made-up citations. https://retractionwatch.com/2025/06/30/springer-nature-book-on-machine-learning-is-full-of-made-up-citations/

-

Ridley, M. (2019). Explainable Artificial Intelligence. Research Library Issues, 299, 1-66. https://doi.org/10.29242/rli.299.3

-

Roland, M. (2026, April 1). Ferguson Signs AI Protection Bills as Public Increasingly Embraces the Technology. The Spokesman-Review. https://www.spokesman.com/stories/2026/apr/01/ferguson-signs-ai-protection-bills-as-public-incre/

-

Roose, K. & Thompson, S. (2026, March 10). Who’s a Better Writer: A.I. or Humans? Take Our Quiz. The New York Times. https://www.nytimes.com/interactive/2026/03/09/business/ai-writing-quiz.html

-

Rotalabs Staff. (2026, February 1). Agent-to-agent networks: Trust dynamics and attack surfaces in Moltbook. Rotalabs. https://rotalabs.ai/blog/moltbook-agent-trust-dynamics-attack-surfaces/

-

SAGE Publishing. (2025). New Technology from SAGE Report Explores Librarian Leadership in the Age of AI [Press release]. https://www.sagepub.com/explore-our-content/press-office/press-releases/2025/05/20/new-technology-from-sage-report-explores-librarian-leadership-in-the-age-of-ai

-

Sellmeijer, J. (2025, September 22). AI hallucinations are flooding libraries with phantom books that don’t exist. Jim Sellmeijer. https://www.jimsellmeijer.com/artificial-intelligence/2025/09/22/ai-hallucinations-are-flooding-libraries-with-phantom-books-that-dont-exist.html

-

Shao, A. (2025, August 27). New sources of inaccuracy? A conceptual framework for studying AI hallucinations. Harvard Kennedy School (HKS) Misinformation Review. https://doi.org/10.37016/mr-2020-182

-

Shumer, M. [@mattshumer_]. (2026, February 10). Something big is happening. [Post]. X. https://x.com/mattshumer_/article/2021256989876109403

-

Sponheim, C. (2024, January 12). Sycophancy in Generative AI Chatbots. Nielsen Norman Group. https://www.nngroup.com/articles/sycophancy-generative-ai-chatbots/

-

Springer Nature. (n.d.). Lithium-ion batteries: A machine-generated summary of current research. SpringerLink. https://link.springer.com/book/10.1007/978-3-030-16800-1

-

TAAFT. (n.d.). There’s An AI For That. TAAFT. https://theresanaiforthat.com/

-

Tai, I., & Ghosh, S. (2025). Integrating AI into Library Systems: A Perspective on Applications and Challenges. JCDL ‘24: Proceedings of the 24th ACM/IEEE Joint Conference on Digital Libraries, 42, 1-11. https://dl.acm.org/doi/10.1145/3677389.3702568

-

The White House. (n.d.). America’s AI action plan. AI.gov. Retrieved February 4, 2026, from http://ai.gov

-

The White House Office of Science and Technology Policy. (2022). Blueprint for an AI bill of rights: Making automated systems work for the American people. https://www.govinfo.gov/content/pkg/GOVPUB-PREX23-PURL-gpo193638/pdf/GOVPUB-PREX23-PURL-gpo193638.pdf

-

Miao, F., & Holmes, W. (2023). Guidance for generative AI in education and research. United Nations Educational, Scientific and Cultural Organization. https://unesdoc.unesco.org/ark:/48223/pf0000386693

-

The Chicago Manual of Style Online (2024). Citation, Documentation of Sources. The Chicago Manual of Style. https://www.chicagomanualofstyle.org/qanda/data/faq/topics/Documentation/faq0422.html

-

UNT Digital Library. (n.d.). Artificial intelligence (AI) Policy Collection [Guide]. University of North Texas Libraries. https://digital.library.unt.edu/explore/collections/AIPC/l

-

U.S. Copyright Office. (2025). Copyright and Artificial Intelligence, Part 2: Copyrightability. https://www.copyright.gov/ai/Copyright-and-Artificial-Intelligence-Part-2-Copyrightability-Report.pdf

-

U.S. Department of Education, Office of Educational Technology. (2023). Artificial intelligence and the future of teaching and learning: Insights and recommendations. Washington, DC. https://www.ed.gov/sites/ed/files/documents/ai-report/ai-report.pdf

-

Vallor, S. (2015). Moral Deskilling and Upskilling in a New Machine Age: Reflections on the Ambiguous Future of Character. Philosophy & Technology, 28(1), 107–124. https://doi.org/10.1007/s13347-014-0156-9

-

Yang, Z., Deng, H., & Jiang, N. (2025). The impact mechanism of artificial intelligence dependence on college students’ innovation capability: An empirical study from China. Frontiers in Psychology, 16. https://doi.org/10.3389/fpsyg.2025.1732837

-

Wanjura, B., Shapiro, D., Mollick, E., Mollick, L., & Meincke, L. (2026, April 2). Prompting science report 5: This is an excellent paper: The effects of prompt injection on grading. SSRN. https://papers.ssrn.com/sol3/papers.cfm?abstract_id=6510758

-

Weaver, K. D. (2024). The Artificial Intelligence Disclosure (AID) Framework: An introduction. College & Research Libraries News, 85(10), 450–454. https://doi.org/10.5860/crln.85.10.450

-

Wong, M. (2026, February 4). The Chatbots Appear to Be Organizing: Moltbook is the chaotic future of the internet. The Atlantic. https://www.theatlantic.com/technology/2026/02/what-is-moltbook/685886/

-

Young, J. R. (2024, August 1). Should Educators Put Disclosures on Teaching Materials When They Use AI? EdSurge. https://www.edsurge.com/news/2024-08-01-should-educators-put-disclosures-on-teaching-materials-when-they-use-ai

Additional Reading Resources

-

Bailey, Jonathan. “Is Plagiarism a Feature of Artificial Intelligence?” Plagiarism Today. March 23, 2023. https://www.plagiarismtoday.com/2023/03/23/is-plagiarism-a-feature-of-ai/.

-

Bailey, Jonathan. “One Way AI Has Changed Plagiarism.” Plagiarism Today. April 11, 2023. https://www.plagiarismtoday.com/2023/04/11/one-way-ai-has-changed-plagiarism/.

-

Balalle, Himendra and Sachini Pannilage. “Reassessing academic integrity in the age of AI: A systematic literature review on AI and academic integrity.” Social Sciences & Humanities Open 11 (2025): 1-12. https://doi.org/10.1016/j.ssaho.2025.101299.

-

Bittle, Kyle and Omar El-Gayar. “Generative AI and Academic Integrity in Higher Education: A Systematic Review and Research Agenda.” Information, 16, no. 4 (2025): 296. https://doi.org/10.3390/info16040296.

-

Boateng, Adwoa et al. “GenAI Literacy Framework for Library Instruction.” Presentation. Rochester Institute of Technology Libraries. September 2025. https://doi.org/10.82520/ritlib-kr02.

-

Bonfiglio, Justin. “Thaler v. Perlmutter: D.C. Court of Appeals Confirms That a Non-human Machine Cannot Be an Author Under the U.S. Copyright Act.” Authors Alliance (blog), March 19, 2025. https://www.authorsalliance.org/2025/03/19/thaler-v-perlmutter-d-c-court-of-appeals-confirms-that-a-non-human-machine-cannot-be-an-author-under-the-u-s-copyright-act/.

-

Cao, Yi et al. “How humorous is AI? Exploring ChatGPT’s role in humor generation and human-AI interaction.” Computers in Human Behavior Reports 20 (2025): 1-9. https://www.sciencedirect.com/science/article/pii/S2451958825002222.

-

Castrillon, Caroline. “Why AI Fatigue Is Wearing You Down—and How to Beat It.” Forbes. July 2, 2025. https://www.forbes.com/sites/carolinecastrillon/2025/06/24/why-ai-fatigue-is-wearing-you-down-and-how-to-beat-it/.

-

CHOICE Media Channel. “AI in the Library: Balancing Use and Institutional Adoption of Digital Tools.” October 24, 2025. YouTube Video. http://www.youtube.com/watch?v=oxUMUWPLYAI.

-

Church, Zach. “AI Cyberattacks and Three Pillars for Defense.” MIT Sloan School of Management. Last modified September 8, 2025. https://mitsloan.mit.edu/ideas-made-to-matter/ai-cyberattacks-three-pillars-defense.

-

Cohen, Julia. “Not Feeling It: AI’s Emotional Disconnect.” USC Viterbi School of Engineering News. Last modified August 21, 2024. https://viterbischool.usc.edu/news/2024/08/not-feeling-it-ais-emotional-disconnect/.

-

Copeland, B. J. “Artificial intelligence.” Encyclopedia Britannica. Last modified November 12, 2025. https://www.britannica.com/technology/artificial-intelligence.

-

Daytona State College Library. “Finding Reliable Information: AI Hallucinations & DeepFake Videos.” Daytona State College Library Guides. Accessed November 12, 2025. https://library.daytonastate.edu/reliable/ai.

-

Desai, Abhyuday, Mohamed Abdelhamid, and Nakul R. Padalkar. “What is Reproducibility in Artificial Intelligence and Machine Learning Research?” Preprint. arXiv. April 29, 2024. https://arxiv.org/html/2407.10239v1.

-

Dilmegani, Cem. “Reproducible AI: Why It Matters and How to Improve It.” AI Multiple. Last modified June 21, 2025. https://research.aimultiple.com/reproducible-ai/#easy-footnote-bottom-2-52626.

-

Doshi, Anil R. and Oliver P. Hauser. “Generative AI enhances individual creativity but reduces the collective diversity of novel content.” Science Advances 10, no. 28 (2024). https://www.science.org/doi/10.1126/sciadv.adn5290.

-

Estlund, Karen. “Guest column: When publishers’ fear of AI prohibits basic uses.” Colorado State University. Last modified October 24, 2025. https://source.colostate.edu/guest-column-when-publishers-fear-of-ai-prohibits-basic-uses/.

-

Ferrari, Fabian, José van Dijck, and Antal van den Bosch. “Observe, Inspect, Modify: Three Conditions for Generative AI Governance.” New Media & Society 27, no. 5 (2025): 2788–2806. https://doi.org/10.1177/14614448231214811.

-

Flaherty, Colleen. “The Digital Divide: Student Generative AI Access.” Inside Higher Ed. Last modified April 21, 2025. https://www.insidehighered.com/news/tech-innovation/artificial-intelligence/2025/04/21/half-colleges-dont-grant-students-access.

-

George Fox University Library. “Generative AI for Library Research.” George Fox University Library Guides. Accessed November 12, 2025. https://libguides.georgefox.edu/c.php?g=1391141&p=10290454.

-

Georgetown Law Institute for Technology Law & Policy. “Tech Brief: AI Sycophancy & OpenAI.” Georgetown Law. Last modified July 30, 2025. https://www.law.georgetown.edu/tech-institute/insights/tech-brief-ai-sycophancy-openai-2/.

-

Georgetown University Library. “WRIT 1150 Library Toolkit: Evaluating AI-Generated Content.” Georgetown Library Guides. Accessed November 12, 2025. https://guides.library.georgetown.edu/writ1150toolkit/evaluatingai.

-

Ghazi, Sahar Habib. “Can You Get Emotionally Dependent on ChatGPT?” Greater Good Magazine. Last modified July 23, 2025. https://greatergood.berkeley.edu/article/item/can_you_get_emotionally_dependent_on_chatgpt.

-

Gonsalves, Chahna. “Generative AI’s Impact on Critical Thinking: Revisiting Bloom’s Taxonomy.” Journal of Marketing Education 0, (2024): 4-19. https://doi.org/10.1177/02734753241305980.

-

Grajek, Susan, Kathe Pelletier and Austin Freeman. “AI Procurement in Higher Education: Benefits and Risks of Emerging Tools.” EDUCAUSE Review, March 11, 2025. https://er.educause.edu/articles/2025/3/ai-procurement-in-higher-education-benefits-and-risks-of-emerging-tools.

-

Green, Brian Patrick. “Artificial Intelligence and Ethics: Sixteen Challenges and Opportunities.” Santa Clara Markkula Center for Applied Ethics. Last modified August 18, 2020. https://www.scu.edu/ethics/all-about-ethics/artificial-intelligence-and-ethics-sixteen-challenges-and-opportunities/.

-

Gundersen, Odd Erik and Sigbjørn Kjensmo. “State of the Art: Reproducibility in Artificial Intelligence.” Proceedings of the AAAI Conference on Artificial Intelligence 32 no.1 (2018): 1644-1651. https://doi.org/10.1609/aaai.v32i1.11503.

-

Henry, Geneva. “Research Librarians as Guides and Navigators for AI Policies at Universities,” Research Library Issues, no. 299 (2019): 47-64. https://publications.arl.org/rli299/49.

-

Henshall, Will. “The Billion-Dollar Price Tag of Building AI.” Time. Last modified June 3, 2024. https://time.com/6984292/cost-artificial-intelligence-compute-epoch-report/.

-

Hervieux, Sandy and Amanda Wheatley. “Building an AI Literacy Framework: Perspectives from Instruction Librarians and Current Information Literacy Tools.” A Choice White Paper, 2024. https://www.choice360.org/wp-content/uploads/2024/08/TaylorFrancis_whitepaper_08.28.24_final.pdf.

-

Hibbert, Melanie, Elana Altman, Tristan Shippen, and Melissa Wright. “A Framework for AI Literacy.” EDUCAUSE Review. Last modified June 3, 2024. https://er.educause.edu/articles/2024/6/a-framework-for-ai-literacy.

-

Huang, Yuxuan et al. “Deep Research Agents: A Systematic Examination and Roadmap.” Preprint arXiv. June 22, 2025. https://arxiv.org/html/2506.18096v2.

-

International Coalition of Library Consortia (ICOLC). “ICOLC Statement on AI in Licensing.” Last modified March 22, 2024. https://icolc.net/statements/icolc-statement-ai-licensing.

-

Jollimore, Troy. “I Used to Teach Students. Now I Catch ChatGPT Cheats.” The Walrus, Last modified March 5, 2025. https://thewalrus.ca/i-used-to-teach-students-now-i-catch-chatgpt-cheats/.

-

Kandemir, Mahmut. “Why AI Uses So Much Energy—and What We Can Do About It.” Penn State Institute for Energy and the Environment. Last modified November 20, 2025. https://iee.psu.edu/news/blog/why-ai-uses-so-much-energy-and-what-we-can-do-about-it.

-

Lee, Hao-Ping (Hank) et al. “The Impact of Generative AI on Critical Thinking: Self-Reported Reductions in Cognitive Effort and Confidence Effects From a Survey of Knowledge Workers.” Proceedings of the 2025 CHI Conference on Human Factors in Computing Systems No. 1121 (2025): 1–22. https://doi.org/10.1145/3706598.3713778.

-

Lee, Keun-woo et al. “AI Literacy: A Framework to Understand, Evaluate, and Use Emerging Technology.” Digital Promise. Last modified June 18, 2024. https://digitalpromise.org/2024/06/18/ai-literacy-a-framework-to-understand-evaluate-and-use-emerging-technology/.

-

Li, Xiaojian et al. “AI Awareness.” Preprint arXiv. April 25, 2025. https://arxiv.org/pdf/2504.20084v1.

-

Lo, Leo S. “AI Literacy: A Guide for Academic Libraries.” College & Research Libraries News 86, no. 3 (2025). https://crln.acrl.org/index.php/crlnews/article/view/26704/34626.

-

Lopez, Allie. “Saying No to AI in Education.” Front Porch Republic (blog). Last modified December 20, 2024. https://www.frontporchrepublic.com/2024/12/saying-no-to-ai-in-education/.

-

Luther College Library. “Preus Library’s AI Literacy Guide.” Luther College Library Guides. Accessed November 12, 2025. https://guides.luther.edu/c.php?g=1476230&p=10994683.

-

Madhusoodanan, Jyoti. “Can Artificial Intelligence Learn the Nuances of Human Humor?” Smithsonian Magazine. Last modified July 28, 2025. https://www.smithsonianmag.com/innovation/can-artificial-intelligence-learn-the-nuances-of-human-humor-180987052/.

-

Marrone, Rebecca, David Cropley, and Kelsey Medeiros. “How Does Narrow AI Impact Human Creativity?” Creativity Research Journal 36, no.1 (2026): 150-160. doi:10.1080/10400419.2024.2378264.

-

Méndez-Suárez, Mariano, Maja Ćukušić, and Ivana Ninčević-Pašalić. “AI FoMO (Fear of Missing Out) in the Workplace.” Technology in Society 84, (2026). https://doi.org/10.1016/j.techsoc.2025.103052.

-

Miller, Carrie. “The Real Environmental Footprint of Generative AI: What 2025 Data Tell Us.” Online Learning Consortium. Last modified December 4, 2025. https://onlinelearningconsortium.org/olc-insights/2025/12/the-real-environmental-footprint-of-generative-ai/.

-

Miller, Katharine. “Privacy in an AI Era: How Do We Protect Our Personal Information?” Stanford HAI. Last modified March 18, 2024. https://hai.stanford.edu/news/privacy-ai-era-how-do-we-protect-our-personal-information.

-

MIT Sloan Teaching & Learning Technologies. “When AI Gets It Wrong: Addressing AI Hallucinations and Bias.” MIT Sloan Teaching & Learning Technologies. Accessed November 12, 2025. https://mitsloanedtech.mit.edu/ai/basics/addressing-ai-hallucinations-and-bias/.

-

Mollick, Ethan. “Giving Your AI a Job Interview.” One Useful Thing (blog). Last modified November 11, 2025. https://www.oneusefulthing.org/p/giving-your-ai-a-job-interview.

-

Murray, Seb. “Study: Generative AI Results Depend as Much on User Prompts as on Models.” MIT Sloan School of Management. Last modified August 4, 2025. https://mitsloan.mit.edu/ideas-made-to-matter/study-generative-ai-results-depend-user-prompts-much-models.

-

Nartey, Josephine. “AI Job Displacement Analysis (2025-2030).” SSRN. Last modified June 30, 2025. https://papers.ssrn.com/sol3/papers.cfm?abstract_id=5316265.

-

Nazarbayev University Library. “Artificial Intelligence Literacy: Basics.” Nazarbayev University Library Guides. Accessed November 12, 2025. https://nu.kz.libguides.com/artificial_intelligence/promptengineering.

-

Ng, Bei Yi et al. “Analyzing Security and Privacy Challenges in Generative AI Usage Guidelines for Higher Education.” Preprint, arXiv. June 25, 2025. https://doi.org/10.48550/arXiv.2506.20463.

-

Nsirim, Onyema. “Hallucinations in Artificial Intelligence and Human Misinformation: Librarians’ Perspectives on Implications for Scholarly Publication.” Folia Toruniensia 25 (2025): 79–98. https://doi.org/10.12775/FT.2025.004.

-

O’Donnell, James, and Casey Crownhart. “We Did the Math on AI’s Energy Footprint. Here’s the Story You Haven’t Heard.” MIT Technology Review. May 20, 2025. https://www.technologyreview.com/2025/05/20/1116327/ai-energy-usage-climate-footprint-big-tech/.

-

Oladokun, Bolaji et al. “ChatGPT and Library Users: AI Risks of Hallucinations and Misinformation.” Cybrarians Journal, no. 76 (2025): 25-37. https://journal.cybrarians.info/index.php/cj/article/view/642.

-

Palumbo, Elizabeth. “A Student’s Right to Refuse Generative AI.” Refusing Generative AI in Writing Studies (blog). August 29, 2025. https://refusinggenai.wordpress.com/2025/08/29/a-students-right-to-refuse-generative-ai/.

-

Park, Christine. “AI v. AI v. AI.” RIPS Law Librarian Blog (blog). January 17, 2023. https://ripslawlibrarian.wordpress.com/2023/01/17/ai-v-ai-v-ai/.

-

Qi, Tao et al. “Evidencing Unauthorized Training Data from AI Generated Content Using Information Isotopes.” Preprint, arXiv. March 24, 2025. https://arxiv.org/html/2503.20800v1.

-

Quay-de la Vallee, Hannah, and Maddy Dwyer. “Students’ Use of Generative AI: The Threat of Hallucinations.” Center for Democracy and Technology. Last modified December 18, 2023. https://cdt.org/insights/students-use-of-generative-ai-the-threat-of-hallucinations/.

-

Resnik, David B., and Mohammad Hosseini. “Disclosing Artificial Intelligence Use in Scientific Research and Publication: When Should Disclosure Be Mandatory, Optional, or Unnecessary?” Accountability in Research (2025): 1-13. https://doi.org/10.1080/08989621.2025.2481949.

-

Roshanaei, Mahnaz, Rezvaneh Rezapour, and Magy Seif El-Nasr. “Talk, Listen, Connect: Navigating Empathy in Human-AI Interactions.” Preprint, arXiv. October 25, 2025. https://arxiv.org/pdf/2409.15550.

-

Royce, Christine Anne. “To Think or Not Think: The Impact of AI on Critical Thinking Skills.” NSTA (blog). March 10, 2025. https://www.nsta.org/blog/think-or-not-think-impact-ai-critical-thinking-skills.

-

Ruwitch, John. “Moltbook Is the Newest Social Media Platform — But It’s Just for AI Bots.” NPR. Last modified February 4, 2026. https://www.npr.org/2026/02/04/nx-s1-5697392/moltbook-social-media-ai-agents.

-

Santa Clara University Library. “Generative AI.” SCU Library Guides. Accessed November 12, 2025. https://libguides.scu.edu/generativeai/evaluation.

-

Shao, Anqi. “New Sources of Inaccuracy? A Conceptual Framework for Studying AI Hallucinations.” Harvard Kennedy School Misinformation Review. August 27, 2025. https://misinforeview.hks.harvard.edu/article/new-sources-of-inaccuracy-a-conceptual-framework-for-studying-ai-hallucinations/.

-

Song, NaYoung. “Higher Education Crisis: Academic Misconduct with Generative AI.” Journal of Contingencies and Crisis Management 32, no. 1 (2024): 1-3. https://doi.org/10.1111/1468-5973.12532.

-

Spennemann, Dirk H. R. “The Origins and Veracity of References ‘Cited’ by Generative Artificial Intelligence Applications: Implications for the Quality of Responses.” Publications 13, no. 1 (2025): 1-23. https://doi.org/10.3390/publications13010012.

-

Stout, Dustin W. “Top Challenges in AI Tool Interoperability.” Magai (blog). November 12, 2025. https://magai.co/top-challenges-in-ai-tool-interoperability/.

-

SUNY Potsdam Library. “AI Ethics.” SUNY Potsdam Library Guides. Accessed November 12, 2025. https://library.potsdam.edu/AI_Ethics.

-

Sutaria, Niral. “Bias and Ethical Concerns in Machine Learning.” ISACA Journal 4 (2022). https://www.isaca.org/resources/isaca-journal/issues/2022/volume-4/bias-and-ethical-concerns-in-machine-learning.

-

The Chicago School University Library. “Artificial Intelligence (AI) Tools and Resources.” Library Guides. Accessed November 12, 2025. https://library.thechicagoschool.edu/artificialintelligence/ailiteracy.

-

Treiman, Lauren S., Chien-Ju Ho, and Wouter Kool. “The Consequences of AI Training on Human Decision-Making.” Proceedings of the National Academy of Sciences 121, no. 33 (2024): 1-12. https://doi.org/10.1073/pnas.2408731121.

-

University of Illinois Urbana-Champaign Library. “Introduction to Generative AI.” UIUC Library Guides. Accessed November 12, 2025. https://guides.library.illinois.edu/generativeAI.

-

University of Nevada, Reno Library. “AI in Research & Teaching.” UNR Library Guides. Accessed November 12, 2025. https://guides.library.unr.edu/generative-ai/literacy.

-

University of San Diego Legal Research Center. “Generative AI Detection Tools.” USD Legal Research Center Research Guides. Accessed November 12, 2025. https://lawlibguides.sandiego.edu/c.php?g=1443311&p=10721367.

-

U.S. Department of Homeland Security. Increasing Threat of Deepfake Identities. Washington, DC: DHS, 2025. https://www.dhs.gov/sites/default/files/publications/increasing_threats_of_deepfake_identities_0.pdf.

-

Vipra, Jai, and Sarah Myers West. “Computational Power and AI.” AI Now Institute. September 27, 2023. https://ainowinstitute.org/publications/compute-and-ai.

-

Wichita State University, AI ChatGPT-4, Bing, and Bard. “The Transformative Effects of AI on Jobs and Education.” Wichita State University. Accessed November 14, 2025. https://www.wichita.edu/services/mrc/OIR/AI/jobimpacts.php.

-

Yu, Bin. “After Computational Reproducibility: Scientific Reproducibility and Trustworthy AI.” Harvard Data Science Review 6, no. 1 (2024). https://doi.org/10.1162/99608f92.ea5e6f9a.

IV. AID Statements

Jaclyn Parrott drafted content for I. Overview of Challenges; II. Specific Challenges: Governance and Academic Integrity (Plagiarism, Source Attribution, and AI Detection Tools); Final Thoughts; III. Resources: Additional Reading Resources; and Slides 1-5, 9, and 13-30. David Carson drafted content for II. Specific Challenges: Hallucinations, AI Competency, Cognitive Off-Loading, Evaluating Content, and AI Tool Accessibility and the Digital Divide; and Slides 6-8 and 10-12. III. Resources: References draws on all sections.

Jaclyn Parrott AID Statement: Artificial Intelligence Tool: Institutional versions of Gemini 3 Flash and M365 Copilot utilized January-April 2026; Visualization: Prompted Gemini 3 Flash and M365 Copilot to generate various images for the presentation slides, most of which required several prompt iterations to accurately reflect the content they illustrate; Data Collection Method: Prompted M365 Copilot to develop AI model comparison charts; prompted the institutional version of Gemini 3 and free versions of Grok 4.1, Claude Sonnet 4.6, and ChatGPT 5.2 to answer questions about their own systems for the Overview of Challenges section; prompted M365 Copilot to state whether it plagiarizes for the Plagiarism section.

David Carson AID Statement: Artificial Intelligence Tool: Google Gemini 3 and M365 Copilot (institutional instance) used January-February 2026; Writing—Review & Editing: Reviewed language for clarity and grammar.